DCM Tutorial – An Introduction to Orientation Kinematics

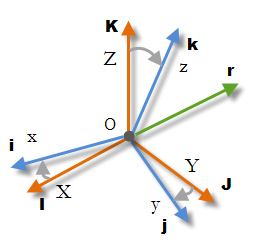

Introduction

This article is a continuation of my IMU Guide, covering additional orientation kinematics topics. I will go through some theory first and then I will present a practical example …